The link to the github repo: https://github.com/vocdex/lerobot-draws

Our team put in a great deal of effort to assemble this final demo submission. In the end, it paid off! We were selected as one of the top creative teams in Munich as well as one of the #top30 winners from the worldwide LeRobot hackathon.

Here are some things we got RIGHT:

- We tried to come up with an original approach. No pick&place foundation robotic model demos. Moreover, we also tried to think about something fun and eye-catching.

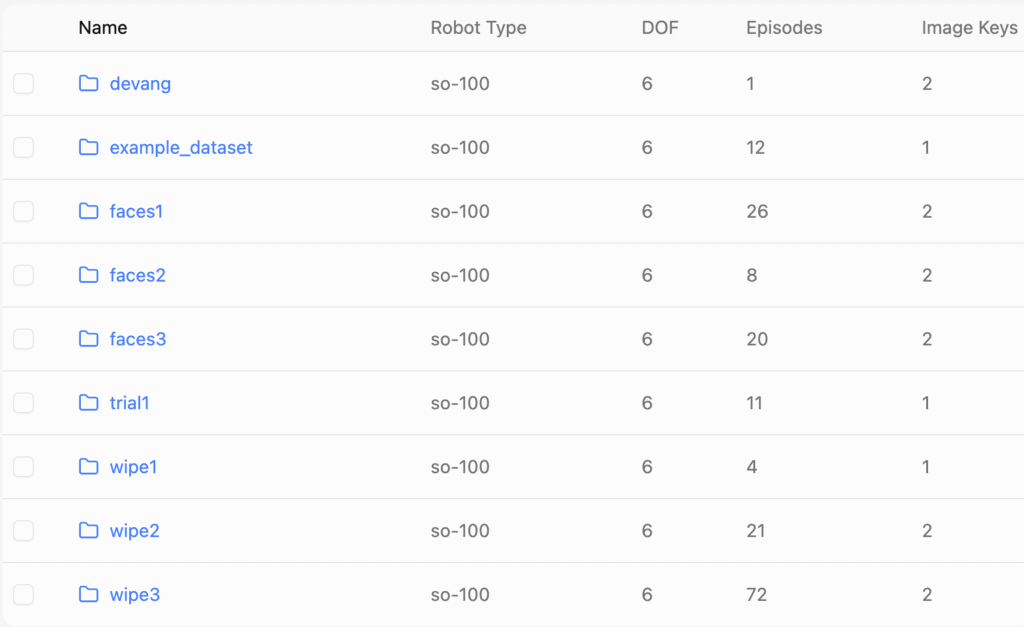

- We split the efforts of our team in order to minimize the risk of not being able to deliver a working demo. Thus, drawing, wiping, and interacting with the robot were engineered by different team members.

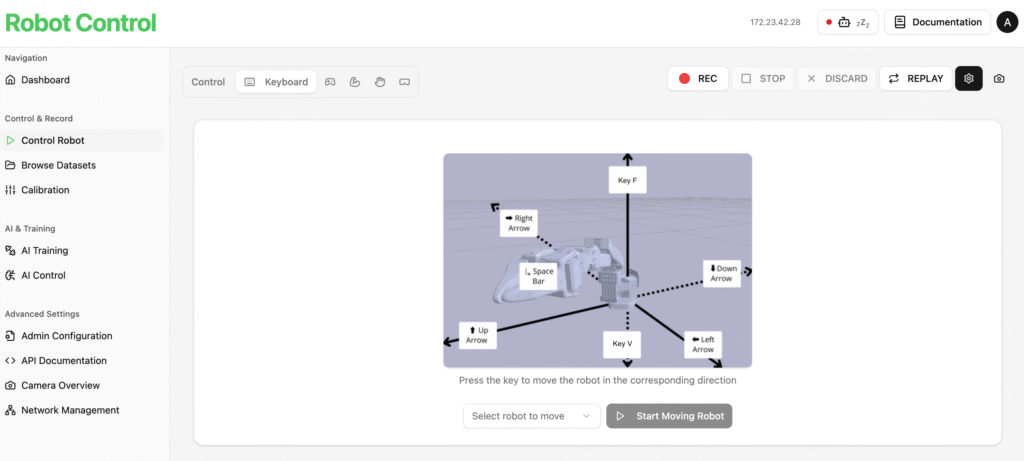

- We leveraged the Phosphobot app and its intuitive GUI in order to collect, train, and deploy datasets fast.

- We started collecting data from day one, which allowed us to test and rapidly define the limits of our idea and decide where best to allocate our efforts.

Now, even more importantly, where did we mess up? What lessons did we learn?

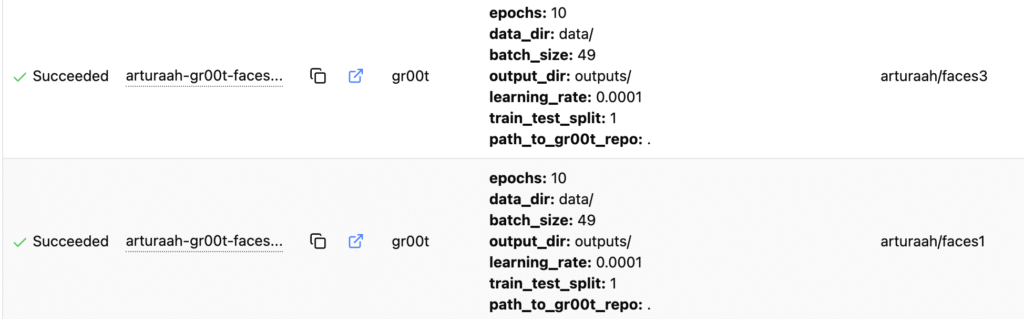

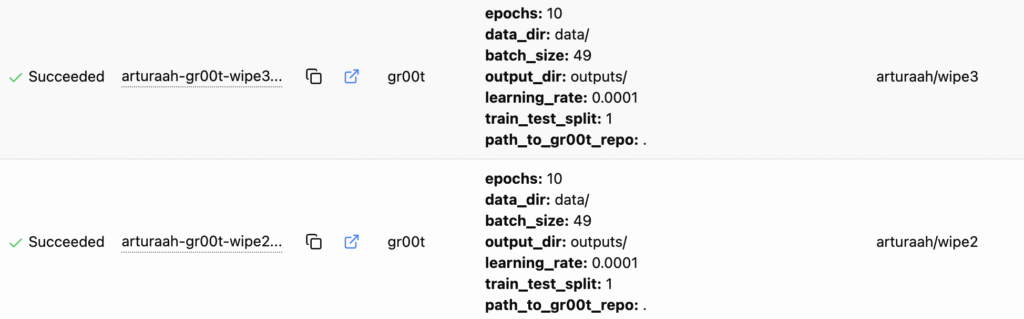

- Although we used a state-of-the-art model: gr00t N1, other foundation models could have better suited our needs. Specially considering the complexity and number of tasks to be executed by one robot, as well as the constraints in terms of time and compute resources we had. Click here if you want to know how to choose the best Robotics foundation model for your application as of June 29, 2025.

- Figuring out and implementing the inverse kinematics for the LeRobot sO-101 robot was very challenging. Using imitation learning from the start would have allowed us to reach further. Moreover, after the announcement of the hackathon winners, it was clear that imitation learning approaches were most in line with the expectations of the jury.

- Camera positioning played a big role when training our gr00t N1 model. When camera positioning and the object to be manipulated were placed in the same manner in training as well as inference, the model was able to successfully perform the task(even with as little as little as 8 episodes. Performance was consistent. On the other hand, when at least one of the cameras position relative to the robot was changed, even by a little bit, the robot failed. Object positioning, affected inference to a smaller degree, yet when the object was very far off(in any of the camera frames) from training data, the robot was also unable to achieve its goal.

We trained all our gr00t models with the same hyperparameters, following phosphobot settings. We assumed it was a better idea to follow the recommended settings from an experienced team rather than adding more variables to figure out in our 2-day hackathon. Nonetheless, it would have been interesting to adapt some of these to our task. Simply adjusting the epochs to our dataset could have resulted in drastically different results.

What did we learn from other teams?

- When thinking about camera setup, the goal is to provide the model with a holistic view of the entire setup. Proximity to the goal and the position of the robot and object should be captured from the camera’s viewpoints. Teams that did not respect these “rules” struggled to run successful inference. A good question to ask yourself when evaluating your setup is: “Can you successfully complete the task teleoperating the robot only watching the camera feeds?“

- Depth cameras were tested and used during the hackathon. Only one team managed to integrate depth into the training data of a deep learning model, showing that it is possible. They splitted the 3 rgb channels and depth channel to include them s two separate camera feeds into the model. Although they did not manage to show successful inference results due to time constraints, the approach is promising. It further enhances the model’s holistic view of the setup.

- Constant backgrounds helped model performance. Teams that used a uniform background were on average more successful than teams that did not pay attention to this detail.

- Many teams opted to use smolVLA(https://arxiv.org/pdf/2506.01844) instead of GR00T. The main arguments were:

- The model is smaller

- The model is better in instances where there are different tasks to be done(meaning different prompts involved in the episodes composing the training set).

At first glance, the claims appear to be true. Teams using smolVLA got on average better results with fewer episodes than teams using any other model. Compute possibilities were different among teams, and thus, it is not straightforward to compare training and inference times between models.

🧠 ✅Check out my post on model comparison if you want to read about whether these claims were supported by theory or not.

🤖 🔧 If you want to build your own SO-101 robot arms check out this blog post.

Leave a Reply