Can a VLM replace human annotators in human-in-the-loop systems?

vlm_in_the_loop_poster___final adlcv_examCan an AI Judge AI? Automating Data Annotation with VLMs

Data is the keystone of modern AI, but getting high-quality, labeled data is one of the biggest bottlenecks in computer vision. The traditional solution, having humans meticulously label or verify thousands of images, is slow, expensive, and doesn’t scale well.

For our Advanced Deep Learning project at the Technical University of Denmark (DTU), our team—German Buttiero, Artur Habuda, and Hassan Hotait—tried to tackled this problem. We asked a simple but powerful question: Can we replace the human in a “human-in-the-loop” annotation system with a powerful Vision-Language Model (VLM)?

The Problem: The Human Bottleneck

Human-in-the-Loop (HITL) systems are a popular middle ground. A computer vision model (like Mask R-CNN for object segmentation) makes predictions on new, unlabeled data. A human expert then quickly approves or rejects these predictions, creating a feedback loop that efficiently labels new data to retrain and improve the model.

While faster than full manual annotation, it still relies on a human for every decision. Our goal was to automate this final, crucial step.

Our Solution: The VLM-in-the-Loop

We designed a system where the human’s role is performed by a VLM. Here’s how it works:

- Train an Initial Model: We start by training a Mask R-CNN model on a small, initial set of labeled data (e.g., 20% or 40% of the full training set).

- Inference: The trained model predicts object segmentations on a pool of unlabeled images.

- VLM as the Judge: Instead of showing these predictions to a person, we feed them to a VLM. The VLM receives the image with the predicted segmentation masks overlaid and a prompt like: “Examine this image… The computer detected these objects: {list of objects}. Begin with ‘yes’ if both the object classes and segmentation masks are accurate.”

- Closing the Loop: The images that the VLM approves are added to our labeled dataset. The Mask R-CNN model is then retrained on this larger, richer dataset.

We repeat this cycle, allowing the model to progressively improve by learning from the data it was most confident about, as judged by our VLM.

The Experiment: VLM vs. a “Perfect” Human

To see if our approach worked, we pitted our VLM-Active Learning system against a Human-Simulated baseline. The “human” was an ideal oracle: it would only approve an image if the model’s predictions were nearly perfect (IoU > 0.6 for all objects, no false positives).

We measured two things:

- Performance: The model’s accuracy, measured by mean Average Precision (mAP).

- Annotation Time: The cumulative time cost, calculated based on how many images were verified in each iteration.

The plot above shows our results. The Y-axis is performance (higher is better), and the X-axis is annotation time in hours (lower is better). Each colored line represents a different experiment, starting with a different amount of initial data (e.g., VLM-Active 20% started with 20% of the data). The numbers (0, 1, 2, 3, 4) on each line mark the performance after each iteration of the loop.

Key Findings:

Our results were very promising.

- VLM is Highly Competitive: As you can see from the plot, the VLM-powered systems (green and black lines) consistently reached performance levels comparable to, and in some cases exceeding, the human-simulated baselines. Crucially, they often did so in significantly less annotation time.

- The System Gets Smarter: A key trend we observed was that in later iterations, both the VLM and the simulated human approved fewer new images. This is exactly what you want to see in an active learning system! As the model improves, it becomes more confident, and fewer “easy” or “informative” examples are left in the unlabeled pool.

- More Data, More Training: We implemented an adaptive epoch schedule, allowing the model to train for longer as the dataset grew. This was a critical adjustment from our initial experiments and ensured that the model had enough time to learn from the new data it was receiving.

The tables from our experiment quantify this success. In one run (VLM-Active 0.8), our system added 167 new images in the first iteration, but only 20 in the last, showcasing the effectiveness of the learning process. We also saw concrete improvements across classes, with the mAP for “Zebra” detection, for example, jumping from 0.615 to 0.715.

Challenges & A Fully Automated Detour

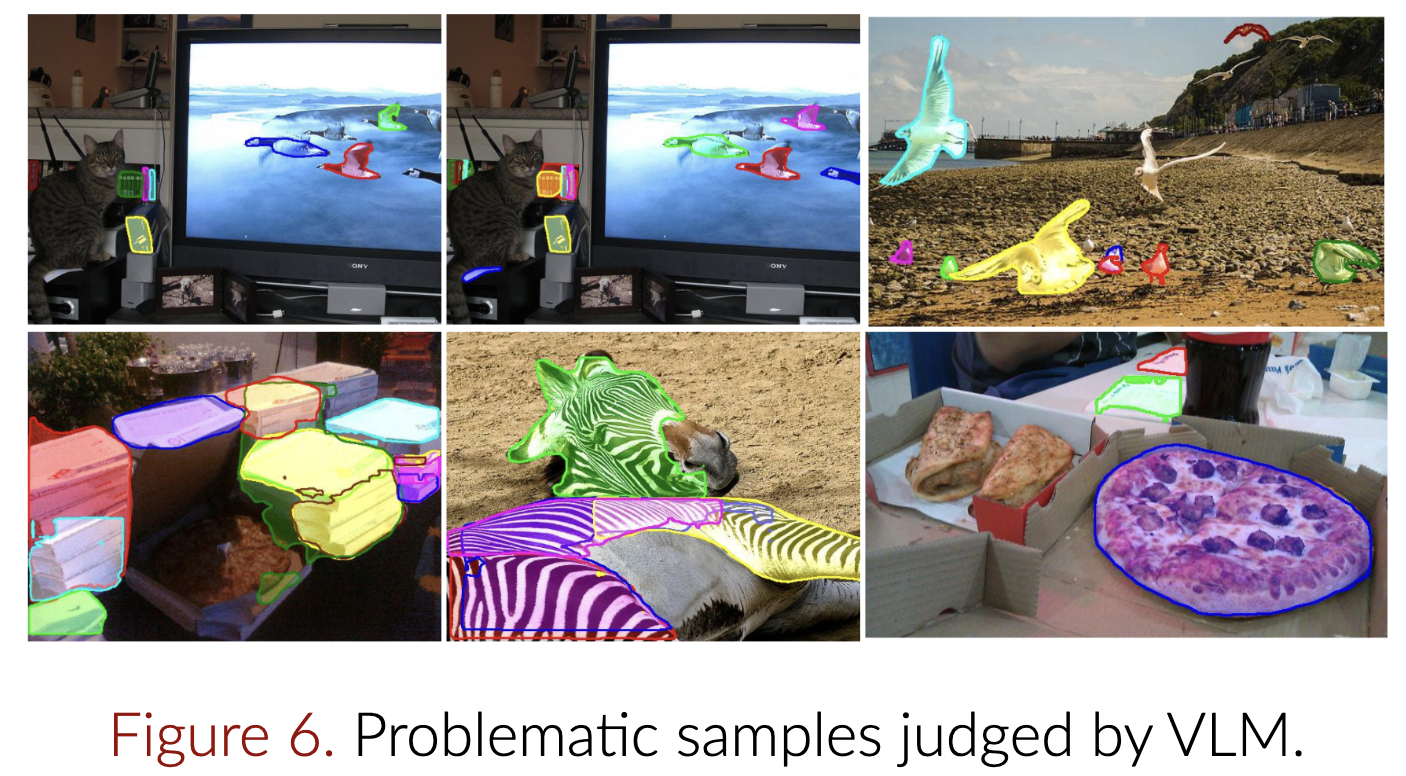

We found that the VLM, while powerful, sometimes struggled with images containing many small, clustered objects.

We also took a detour to explore the ideal setup: a fully automated system with zero initial labels. We used a powerful model called Grounded-SAM2 to generate “pseudo-labels” for our entire dataset and then trained a Mask R-CNN on them. While fascinating, the quality of these automated labels wasn’t high enough, leading to a model with a mAP of 0.49, significantly lower than the 0.65 mAP from a fully human-supervised model. It was a valuable lesson in the current limits of zero-shot transfer learning.

The Verdict

So, can a VLM replace a human annotator in the loop? Our findings suggest a strong “yes.”

Our VLM-in-the-loop system proved to be a powerful and efficient alternative to traditional human-based verification. It successfully guided the learning process of a segmentation model, achieving high performance while dramatically cutting down on the required annotation time. While not a perfect replacement in every scenario, this approach demonstrated the possibility of creating more scalable, efficient, and automated data pipelines for computer vision.

Our GitHub repo: https://github.com/GermanButtiero/vlm-in-the-loop

Leave a Reply